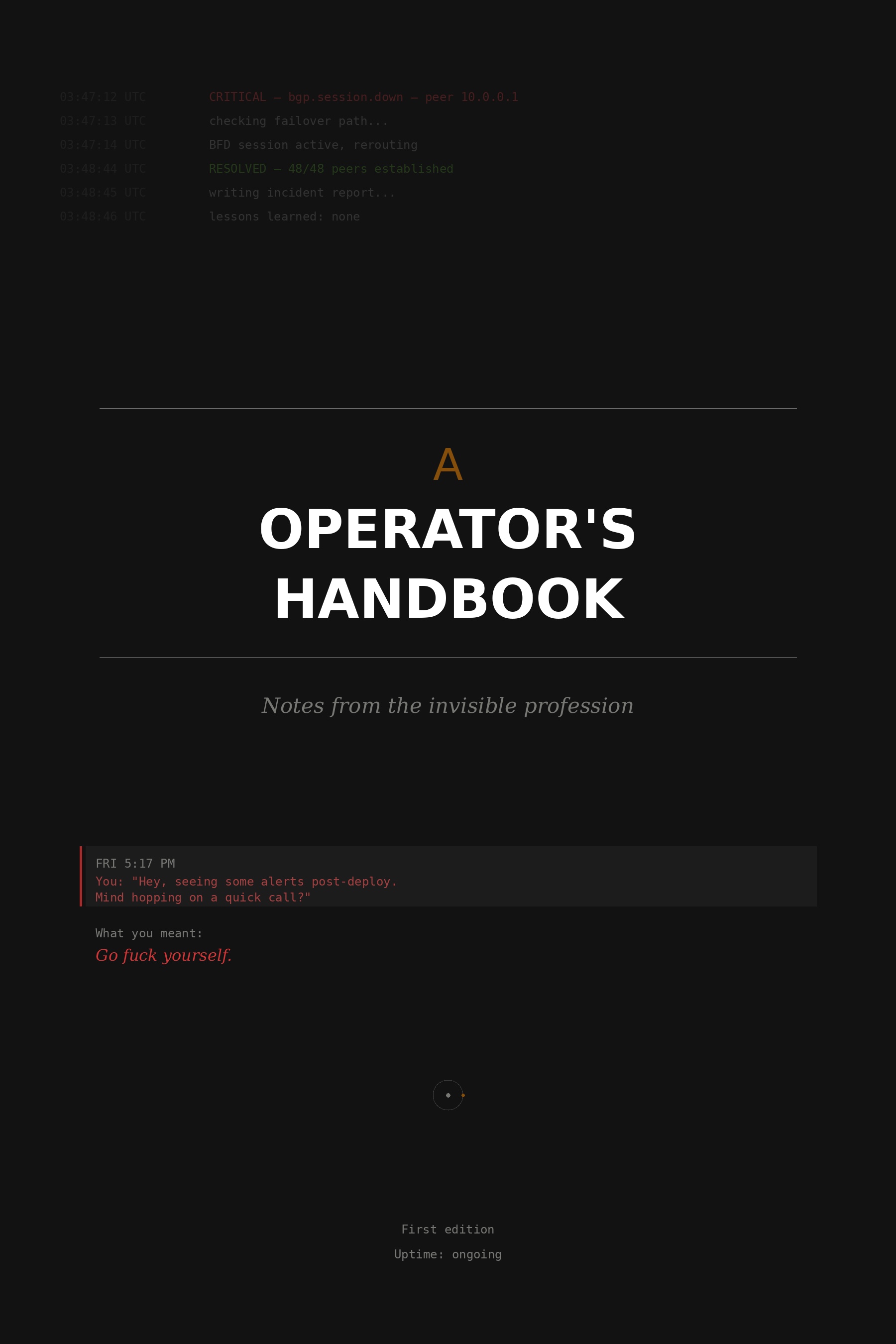

An Operator's Handbook

Notes from the invisible profession

Download PDF ↓

Part I — First light

The first page

On the night the phone became a leash

The phone sits on the nightstand for the first time, and it changes the room. Not physically—it is the same phone that was there yesterday, when it was just a phone. But tonight you are on call, and the phone is no longer a phone. It is a leash. It is a tether to a system you do not fully understand, operated by users you will never meet, running on hardware you have never touched in a building you have never entered. And if that system fails—tonight, on your watch—the phone will tell you, and you will be expected to fix it.

Your manager said "you'll learn what normal looks like." Normal. The most important word in operations, and the one that appears in no job description. Not good, not optimal, not correct—normal. The baseline hum of a system that is doing what it was designed to do, plus the small deviations that it was not designed to do but has been doing for so long that they have become indistinguishable from intent. You do not yet know the hum. You will learn it the way you learn a lover's breathing—not by studying it, but by lying next to it, night after night, until the absence of it wakes you.

You stare at the alert. Eighty-nine percent. Is that bad? The runbook says to investigate above ninety. You are one percent below the threshold for action and one hundred percent above the threshold for sleep. This is your first lesson: the alert is not the problem. The alert is a claim about the problem, made by a monitoring system that was configured by a person who is not you, according to thresholds that were chosen for reasons that may or may not still apply, and your job is not to trust the alert or to ignore the alert but to evaluate the alert, which requires a model of normal that you do not yet possess.

You check the disk. Log files. A service writing debug logs to a directory that nobody is rotating because nobody knows the debug logging is enabled because it was turned on six months ago during an incident and never turned off. You find this out by reading the config file, which has a comment next to the debug flag that says TODO: disable after incident. The TODO is dated March. It is September.

This is the first shape of operations work: not building the thing, but finding the gap between the thing as intended and the thing as it exists. The gap has a name. It is called drift, and it is the natural state of every system that is maintained by humans, which is to say every system.

You disable the debug logging. You set up the log rotation. You close the alert. You do not go back to sleep, because the adrenaline has nowhere to go and your body does not yet know that this was a small thing. Your body will learn. In six months, you will glance at a disk warning and know, from the host name alone, whether it requires action or patience. In a year, you will feel the difference between a real alert and a noisy one the way a parent feels the difference between a hurt cry and a tired cry. But tonight you are new, and everything is loud, and the phone is on the nightstand, and the system is talking to you for the first time, and you do not yet speak its language.

You are listening. That is enough. That is where it starts.

You'll learn what normal looks like.

— your first manager, week one, the only useful thing she said

The map is not the territory

On the archaeology of documentation

The network diagram was last updated by someone named Kevin. You have never met Kevin. Kevin left fourteen months ago, and his diagram—a Visio file on a SharePoint site that requires a VPN that uses a certificate that expired in Q2—is the only map you have of the system you are now responsible for.

You find the diagram anyway, because operators are archaeologists. You open it and see a network that does not exist. It existed once—probably around the time Kevin drew it—but the system has evolved since then in ways that Kevin did not anticipate and that nobody documented because the person who made the change was not Kevin and did not know about Kevin's diagram and would not have updated it if they had.

There is a switch in the diagram that was decommissioned in January. There are two links drawn between buildings that share a single fiber path, because Kevin drew the logical topology and someone else built the physical one and nobody reconciled the difference. There is a firewall labeled "FW-01" that is actually the third firewall to hold that name, because the naming convention tracks the role and not the hardware, and the previous two are in a closet somewhere, waiting to be asset-tagged and recycled.

The first law of operations: the documentation describes the system that was intended, not the system that exists. The system that exists is the documentation plus every undocumented change, workaround, hotfix, and "temporary" adjustment applied since the documentation was written. The operator is the bridge between the map and the territory.

You start redrawing. Not because anyone asked you to—nobody will ask you to, nobody asks for documentation the way nobody asks for insurance until the house is on fire—but because you cannot operate a system you cannot see, and the only way to see it is to build the picture yourself, cable by cable, route by route, from the living system and not from Kevin's memory of what it was supposed to be.

It takes three weeks. When you're done, you have a diagram that is accurate as of today and will begin drifting from reality tomorrow. You put it on the wiki. You tell yourself you'll keep it updated. You will try. You will mostly succeed. And someday you will leave, and someone will open your diagram and find a network that does not quite exist anymore, and the cycle will begin again. This is not a failure of documentation. It is the nature of living systems: they move faster than any description of themselves.

Your diagram will be the best one they've had. It will still be wrong. Both things will be true.

The map is not the territory, but the operator who has walked the territory is the map.

— scrawled on a whiteboard in the NOC, erased by facilities during a weekend deep clean

The weight of green

On the invisibility of competence

Forty-eight of forty-eight peers established. All green. The dashboard is a wall of quiet confidence, every indicator reporting that nothing, anywhere, is wrong. This is your masterpiece. Nobody will see it.

The quarterly review slides go up. Sales: revenue numbers, win rates, pipeline growth. Marketing: campaign performance, lead generation, brand metrics. Engineering: features shipped, bugs closed, deployments completed. Operations: [slide not included]. Not because your work doesn't matter. Because your work, when it goes well, produces no data. No incidents means no incident metrics. No outages means no recovery time statistics. No failures means no "lessons learned" to present in a blameless format to a room of people who are secretly relieved they weren't involved.

Operations slide: 99.97% uptime. Zero Sev-1 incidents. Audience: polite nods.

Your manager asks you to "quantify your team's impact." You stare at the email. You could measure uptime—99.97% this quarter—but uptime is expected, not celebrated. You do not get credit for the building not falling down. You could count the incidents that didn't happen: the capacity headroom you maintained, the patches you applied before the CVE went public, the config change you caught in review that would have black-holed a /16 in production. But preventing incidents is not a measurable activity in any framework your organization uses. Prevention is the absence of data. You are asking them to see a hole in the shape of a disaster that never occurred.

The weight of green is the weight of proof you cannot provide. Every green indicator is a claim: this system is healthy because someone is maintaining it. But the claim is indistinguishable from the null hypothesis: this system is healthy because it doesn't need maintaining. And you cannot, from the outside, tell the difference. That is the operator's paradox. The better you are, the less evidence there is that you exist.

You write the slide anyway. You put 99.97% in large font, because someone told you that executives respond to large font. You add a bullet about the zero Sev-1 record. You add a second bullet about the capacity planning work that ensured the Black Friday traffic surge was handled without intervention. You do not add a third bullet that says the reason nothing broke is that I spent three hundred hours this quarter making sure nothing would break, because that bullet sounds like a complaint rather than an accomplishment, and the line between the two is the line between getting budget and getting cut.

The dashboard is green. The slide is ready. The meeting will last twelve minutes and your section will take two of them. That's the weight. Not the work itself—the work is fine, the work is the point—but the gap between the weight of the work and the weight of the recognition. You carry both. One in each hand. They have never balanced. They were never going to.

All systems nominal.

— the most expensive sentence in operations, delivered free of charge, every fifteen minutes

Part II — The cycle

Not this again

On the half-life of institutional memory

The meeting invite arrives on a Tuesday. Subject: Infrastructure Cost Optimization — Q3 Planning. You know what this meeting is before you open it. You have been to this meeting before. Not this specific meeting—this specific meeting is new, organized by a director who joined six months ago, who has ideas, who has been reviewing the cloud spend dashboards with the fresh eyes of someone who has never been woken at 3 a.m. by the consequences of the thing he is about to propose cutting.

You click into the attached deck. Slide 1: a bar chart showing monthly infrastructure costs trending upward. Slide 2: a breakdown by service, with the redundant links highlighted in yellow—highlighted in yellow—as if the color itself were an argument. Slide 3: a bullet point that reads "Potential savings: $184K/yr by consolidating to single-path connectivity in non-critical regions." You read the words non-critical regions and your jaw does a thing it has learned to do, a small tightening that is not quite clenching, that exists in the space between professional composure and the urge to forward this deck to the last three post-mortem reports that explain, in detail, what happens when a region that was classified as non-critical turns out to be the one carrying the traffic that the CEO's demo depends on.

You have seen this deck before. Not this deck. This species of deck.

Every organization has a metabolic cycle for bad ideas. The idea is proposed, evaluated, rejected for good reasons, and then forgotten—not by the systems it would have affected, but by the people who approved the rejection. Eighteen months later, a new person finds the same number on the same dashboard and has the same insight, and the cycle begins again. The operator is the only fixed point. The operator is the institutional memory that the institution does not know it has.

You pull up the ticket history. Not because you need to—you remember—but because you have learned that memory, unaccompanied by evidence, is dismissed as resistance to change. The tickets are there, stacked like geological strata, each one a fossil of the same argument petrified at a different depth.

Five tickets. Three years. Two outages. Two rejected proposals to do the exact thing that caused the outages. And now a third proposal, from a third person, who was not here for any of it. You could send these tickets to the new director. You have, in the past, sent tickets like these to people like him. You know how it goes. He will read them, or he will not. If he reads them, he will note that the outages occurred under a previous architecture, and suggest that improvements since then have changed the risk profile. If he does not read them, he will arrive at the meeting with the confidence of someone who has done the math and found savings, and you will be the person arguing against savings, which is an argument that has never once, in the history of corporate infrastructure, sounded like anything other than an engineer being precious about her design.

The operator's dilemma is not technical. It is rhetorical. The case for redundancy is a proof by contradiction—you cannot show the value of the thing that prevented the disaster, because the disaster did not happen, because the thing was there. You are asking people to pay for the absence of evidence.

You go to the meeting. Of course you go to the meeting. You bring the ticket numbers, printed on a single sheet, because you learned long ago that a screen share loses the room but a piece of paper holds it.

The director presents. He is good at it. The deck is clean, the numbers are correct, and the logic is airtight in the way that logic is airtight when the model excludes the variable that matters. He says single point of failure once, early on, as if to acknowledge the counterargument and move past it. He says acceptable risk twice. He says industry standard, which means he has read a blog post by a company whose infrastructure is nothing like yours but whose name is impressive enough to borrow.

"Industry benchmarks suggest single-path is sufficient for Tier 2/3 regions with <5% of total traffic."

When you speak, you are brief. You say that Region B was classified as Tier 3 in 2019 and was carrying 11% of production traffic when its single path failed. You say that the classification system has not been updated since 2021 and that three services have since been migrated to regions that are still labeled non-critical. You place the sheet of paper on the table and say that the ticket history shows two previous attempts to make this same change, both rejected after incidents that cost more in engineering hours than the proposed savings.

"The savings are real. The risk model isn't. We're pricing redundancy against a classification system that's two years stale."

The director nods. He is a reasonable person—most of them are, that's what makes this hard. He didn't propose cutting redundancy because he's reckless. He proposed it because the data he had was incomplete, because the institutional memory that would have completed it lives in your head and in a stack of closed tickets that nobody reads proactively, because the organization's immune system against repeated mistakes is a single engineer who happens to remember.

This is the thing about institutional memory: it doesn't live in the institution. It lives in people. When the person leaves, the memory leaves with them, and the organization discovers this eighteen months later, when someone proposes the thing again and there is no one in the room who winces.

The meeting ends with a compromise. The director will update the region classification before any changes are made. You will provide the traffic data. It is the right outcome. It is also the same outcome as last time.

"After reviewing incident history and current traffic distribution, the committee agreed to defer the consolidation proposal pending a full region reclassification exercise."

"After reviewing incident history and current traffic patterns, the committee agreed to defer the consolidation pending an updated region classification."

"After reviewing incident history and current traffic distribution, the committee agreed to defer…"

You could write a design document. You have thought about this. A proper architectural decision record that explains, once and for all, why the redundant paths exist. You could write it well. You could put it in the wiki, in the section on network architecture, between the diagram that is eighteen months out of date and the onboarding guide that references a VPN concentrator you decommissioned in 2022.

You know what will happen to the document. It will be read by the three people who already agree with you. It will be linked in one Slack thread, praised, and then never opened again. The next director will not find it because the next director will not look for it. He will find the dashboard, the bar chart, the yellow highlights. He will schedule a meeting. You will get the invite on a Tuesday.

The operator does not fight entropy. The operator is the local reversal of entropy, a pocket of order sustained by attention and memory in a system that trends, always, toward forgetting. The work is not to stop the cycle. The work is to be present when it comes around again, to say the same true thing in the same patient voice, and to know that the patience is the point.

You walk back to your desk. The dashboard is green. Forty-eight of forty-eight peers established. The redundant paths are there, carrying no traffic, costing $184,000 a year, doing exactly what they are supposed to do, which is nothing, until the moment they do everything.

You'll be here next time. You're always here next time. That's the job.

The document exists. The tickets exist. The post-mortems exist. The problem was never a lack of documentation. The problem is that organizations don't read—they rediscover.

— annotation in a margin of a design doc, author unknown, last modified 2021, last viewed 2021

Screaming into the void

This isn't the first, nor likely to be the last

You know before you're fully awake. Not the specifics—not yet—but the shape of the thing. The phone buzzing on the nightstand has a particular cadence when it's PagerDuty versus everything else, and your nervous system learned to distinguish them sometime in your second year on-call.

This isn't the first time. The thought arrives without drama, the way a bus driver might note another red light. You've seen this peer drop before—twice in the last eighteen months, both times a carrier issue on the long-haul span through Pennsylvania. You already know the playbook because you wrote the playbook.

bgp.peers: 47/48

failover: BFD <1s

traffic.rerouted: yes

customer.impact: negligible

You check anyway. You always check. Not because you doubt the system but because the ritual is the only thing that separates competence from complacency, and the distance between those two words is the distance between sleeping through the night and explaining to a VP why a region went dark.

The experienced operator does not fear the novel failure. She fears the familiar one—the one she has solved so many times that she might, one night, trust the automation and roll over. That is the night it will be different.

There is a tally you keep. Not written down—nothing so formal—but carried in the body like a boxer's count of rounds. You know the feel of each one the way a sailor knows swells: this is a two-footer, this is a six-footer, this one has an undertow.

Fourteen times. Fourteen times you've opened a terminal for this specific neighbor address. One of them, the third, was the incident that taught you what a routing loop looks like from inside: traffic circling between two ASes like water in a drain, TTLs decrementing to zero, packets dying of exhaustion while you and an engineer at the other carrier talked through the problem on a bridge call at 2 a.m. with the muted patience of two people who understood that anger would not converge the routes faster.

You resolved it. You wrote the post-mortem. You added a rule to the route policy. And then, eight weeks later, the same peer dropped for a completely different reason, and the only evidence that you had ever solved anything was the fact that this particular failure mode was not the one you were looking at.

Operations is not a linear narrative. There is no climax, no resolution, no third act. Operations is a cycle, and the operator's skill is not in breaking the cycle but in becoming so fluent within it that each revolution takes a little less from you. This is what mastery looks like: not transcendence, but efficiency of suffering.

The session re-establishes at 03:48. Twelve thousand prefixes reload in under two seconds.

You will write the incident report in the morning. Root cause: carrier fiber cut. Impact: negligible. Remediation: none required. Lessons learned: none. That last field is the one that haunts you. Lessons learned: none. Not because there's nothing to learn, but because you already learned it. The lesson was implemented. And here you are again, filling in the same form, because a backhoe in Pennsylvania doesn't read your post-mortems.

There is a particular cruelty in being asked what you learned from an experience you already mastered. It is the organizational equivalent of asking a surgeon what she learned from the last time she washed her hands.

The repetition is the thing nobody warns you about. They want a hero's journey: the call to adventure, the ordeal, the return with the elixir. What they get is someone who has internalized that the elixir expires and the dragon respawns and the kingdom needs saving again next Tuesday.

This is not burnout. Burnout is a fire that goes out. This is something else—a fire that keeps burning at a low, steady grade. You are not exhausted. You are calibrated. You care so precisely that it's invisible.

At 4:15, you close the terminal. Tomorrow the peer will be up. The carrier will splice the fiber. You will go to work and no one will mention the outage because the outage did not, in any way that matters to anyone but you, happen. And the next time—because there will be a next time—you will do it again. Not because you are heroic. Because you are the person who is here.

The void does not answer. But you have stopped expecting it to. The scream is for you—proof that on the fourteenth repetition, in the dark, in the silence between the alert and the resolution, you were still awake.

Lessons learned: none. The lesson was already known. The lesson was built into the system. The system worked. File under: success. Close ticket. Set alarm. Wait.

— INC-2024-0847, internal post-mortem, field 7 of 7, first draft, deleted before submission

The ones who leave

On the things that walk out the door

Sarah's desk is empty on Monday. Not the empty of working from home—the empty of gone. Monitor dark, keyboard centered, no coffee cup. The desk of someone who returned her badge on Friday and did not send a goodbye message because goodbye messages are for people who believe they will be missed by the organization and not just by the three people who sat near them.

She was good. She was the one who knew the DNS infrastructure—not knew about it, but knew it, the way you know the sound of your own car's engine. She could tell you which resolvers were slow by instinct, before the metrics caught up. That knowledge is gone now. It walked out with her badge and her laptop and her muscle memory, and the organization will not notice for approximately six weeks, which is how long it takes for the first problem to arise that only she would have recognized by its shape.

Sarah has moved on to new opportunities. We wish her well!

You find out where she went: a SaaS company, product engineering, "building things instead of keeping things alive." Everyone who leaves says a version of this. They want to build. As if what you do is not building. As if the infrastructure that will run their future product was not built, maintained, and defended by people exactly like the person they used to be.

When an operator leaves, the organization loses two things. The first is the person. The second is every piece of knowledge that the person carried but never wrote down—not because they were lazy, but because the knowledge was not the kind that can be written. It was reflexive, intuitive, contextual. It was the pause before typing a command, the instinct to check a log that the runbook doesn't mention, the feeling in the gut that says this alert is different. You cannot document a gut feeling. You can only lose it.

You've watched others leave. Not one or two—enough to see the pattern. They burn bright for two or three years. They build something good. They get tired of explaining why it matters. They get a recruiter message on LinkedIn from a company that uses words like greenfield and from scratch and you'll have real impact, as if the impact of keeping a system alive for a million users is somehow less real than the impact of building a new one for zero.

They leave. Their desk goes quiet. Their monitoring dashboards get reassigned to someone who does not yet know what normal looks like on those graphs. And you stay. Not because you are less ambitious. Because someone has to remember why the firewall rule on port 8443 exists, and that someone is now you, and only you, and the weight of that knowledge is the weight of the organization's history sitting on the shoulders of whoever didn't leave.

You stay. You absorb another colleague's institutional memory. You add it to the pile. The pile never gets lighter. It only gets more yours.

The system doesn't know she's gone. The packets don't care. But the next incident will take forty-five minutes instead of fifteen, and nobody will know why.

— private thought, Monday morning, staring at an empty desk, not written down until now

Part III — The language

The incident report

On the form that shapes the narrative

There is a form. There is always a form. INC-2024-0291, Severity 2, Duration 47 minutes. The form has fields, and the fields have labels, and the labels contain assumptions about how systems fail that have not been examined since the form was designed by someone who has never been in an outage.

Root Cause (singular). As if complex systems fail for one reason. As if the outage was caused by A, and not by A in the context of B, exacerbated by C, missed by D, and prolonged by E. The form wants a root cause. You give it one. You pick the cause that is most legible, most actionable, most likely to result in a task that someone will actually complete. You do not pick the real root cause, which is that the system has been accumulating technical debt for two years and the monitoring coverage is 60% of what it should be and the on-call rotation is understaffed by one person, because those are not root causes that fit in a text field. Those are conditions. And the form does not have a field for conditions.

Root cause: Misconfigured health check threshold caused premature failover.

Impact: measured in minutes. Forty-seven minutes of degraded service. Not measured: the operator's cortisol spike at 3 a.m. Not measured: the context switch cost of the two engineers pulled off their sprint work. Not measured: the forty-five minutes of post-incident documentation. Not measured: the two hours of meetings that will follow. The form measures impact the way a scale measures a storm: it tells you the weight of the rain but nothing about the wind.

Lessons Learned: the field where truth goes to die. You know what you learned: that the team is too small, that the monitoring is too sparse, that the person who configured the health check was not the same person who understood the failure mode it was meant to detect. You write instead: Improve health check configuration review process. Add integration test for failover scenarios. Action items. Assignable. Trackable. Closeable. The truth is not closeable. The truth is a condition, not a task.

The incident report is not a record of what happened. It is a translation of what happened into a language that the organization can process. And like all translations, it loses something. What it loses is the texture: the fear, the improvisation, the moment when you tried something that wasn't in the runbook and it worked, the moment when you tried something that was in the runbook and it didn't. The report preserves the skeleton. The muscle and nerve are stripped away.

You file the report. You assign the action items. In six weeks, someone will close them, and the incident will be "resolved" in the way that incidents are resolved: not by fixing the conditions that produced them, but by addressing the symptoms that the form was designed to capture. The conditions will remain. The next incident will have a different trigger and the same root cause, and the form will ask for a root cause (singular), and you will provide one, and the cycle will continue, and the form will never ask the question that matters: what is this system's condition?

Because that question does not fit in a text field.

Root cause: the form asked for one answer and the system had seven.

— deleted from an incident report during peer review, replaced with something actionable

Blameless

On the weight of a word that carries nothing

The word sits at the top of the template in bold: BLAMELESS. As if typography could do what culture cannot. As if the font weight of a heading could redistribute accountability the way a load balancer redistributes traffic—evenly, automatically, without anyone having to decide who carries what.

You have been in blameless post-mortems where someone's face went red. Where the timeline was recited in a tone that was not accusatory but was also not not accusatory. Where "what could we have done differently" was answered with "followed the change process," which is a sentence that has no subject but everyone in the room knows who the implied subject is.

Blameless doesn't mean no one is responsible. It means responsibility is redistributed from the person to the process. The human who pushed the bad config becomes "the change management process that allowed an unreviewed config to reach production." This is meant to be systemic thinking. Often, it is. Sometimes, it is a way of saying the same true thing in a voice so passive that accountability dissolves before it reaches any particular person.

The blameless post-mortem was invented to solve a real problem: engineers who are afraid of blame will hide mistakes, and hidden mistakes kill systems. The solution—remove the blame, keep the analysis—is sound. But somewhere between the principle and the practice, "blameless" became a way of distributing responsibility so evenly that it became no one's. And a responsibility that belongs to no one is a responsibility that will not be carried.

The hardest post-mortems are the ones where you did everything right. Where the runbook was followed, the alert fired on time, the response was within SLA, and the outage still lasted forty-seven minutes because the system has a failure mode that the runbook doesn't cover and the monitoring doesn't detect and the architecture doesn't prevent. Where the action item is not "don't do that again" but "redesign this subsystem," which is a six-month project that requires budget that requires a business case that requires—here it is again—an outage that was bad enough to justify the spend.

You sit in the blameless meeting and you are not blamed and you are not absolved and you are not given the resources to prevent the next one. You are given an action item. You close it in six weeks. The condition persists. The word blameless sits at the top of the next template, bold, waiting.

Blameless is the right idea. You believe this. But the word has been worn smooth by overuse, like a stone in a river, until it means nothing more than we will have this meeting and no one will raise their voice. The not-raising of voices is not the same as the understanding of systems. The quiet room is not the same as the safe room. And the form—always the form—asks for action items, because action items can be closed, and closed items look like progress, and progress is what the organization needs to see in order to believe that the thing that happened will not happen again.

It will happen again. The action items will be closed when it does.

Blameless: adjective. Meaning: the blame has been evenly distributed to a thickness that is no longer visible to the naked eye.

— a definition that will never appear in any official glossary

Part IV — The long haul

The thing you built

On outliving your own relevance

Someone pings you about a routing policy you wrote three years ago. They do not know you wrote it. They do not know anyone wrote it—to them, it is simply the configuration, a thing that exists the way a wall exists, without apparent authorship. They want to know why it strips a specific BGP community. You know why. You remember the afternoon you added it, the analysis that preceded it, the satisfaction of a clean solution to a messy problem involving a peering partner who was leaking routes with a community tag that caused suboptimal path selection in a region they didn't even serve.

You don't say any of this. You say: "Yeah, I think that was added to handle a community leak from the peering partner. There should be a comment in the config." There is a comment in the config. You put it there. It says: Strip 65000:100 per INC-2022-0044. Six words. The entire story compressed into a ticket number and a directive. The person who pinged you will read the comment, understand the what, never know the why in full, and move on. The policy will continue to do its work, silently, correctly, carrying no trace of the thought that produced it.

There is a particular feeling that comes from seeing your work running in production long after you've stopped thinking about it. It is not pride, exactly—pride requires an audience. It is closer to the feeling of recognizing your own handwriting in an old notebook: a quiet verification that you were here, that you thought about this, that the thought was good enough to survive without you.

The systems you build will outlive your relationship to them. This is the strange contract of operations work: you pour attention into a thing, and if you do it well, the thing becomes self-sustaining—not in the sense that it maintains itself, but in the sense that it can be maintained by someone who has never met you, who knows nothing about your intentions, who will interact with your work purely through the artifacts you left behind: the config, the comment, the ticket number, the policy that strips the community tag for reasons that are now historical and will eventually be forgotten entirely.

You will not be there when it is forgotten. You will not be there when someone, years from now, looks at the policy and wonders why it exists, and removes it because it seems unnecessary, and discovers—three weeks later, in a Sev-2 incident at 3 a.m.—that it was necessary after all. You will not be there, but the thing you built will be, and its absence will be felt, and no one will know to credit you for its presence or blame you for its removal.

This is the afterlife of infrastructure: your work survives, anonymous, until it doesn't.

Strip 65000:100 per INC-2022-0044.

— a config comment that is the only remaining evidence of an elegant solution, an afternoon's work, and a career's worth of context

Passing the pager

On the things you cannot hand over

You hand them the pager. Not literally—it is an app now, a notification pipeline, a chain of webhooks and escalation policies—but the gesture is ancient. You are passing a burden to someone who does not yet know its weight. You remember receiving it yourself, years ago, from someone whose name you can still recall and whose expertise you have spent your career trying to match.

"Here's the runbook," you say, and you open the wiki page, and you watch them read it, and you realize in real time how much of what you know is not in the runbook. The runbook says check BGP peer status. It does not say but first, glance at the weathermap, because if US-East is yellow and the peer that's down is in Virginia, it's probably the same carrier issue from last quarter and you can skip straight to the carrier status page. It does not say if the alert fires between 2 and 4 a.m. on a Tuesday, it's probably the batch job and the peer will re-establish on its own. It does not say trust your gut when the metrics look fine but the dashboard feels wrong.

These are not documentable things. These are reflexes. Built over years. Ground into the nervous system by repetition. You cannot hand someone a reflex. You can only hand them the phone and hope that they will build their own.

Training a replacement is the act of discovering the boundary between knowledge and instinct. Everything on one side of that boundary can be transferred: procedures, credentials, escalation paths, the location of the config files, the name of the contact at the carrier NOC. Everything on the other side—the pause before typing a command, the instinct to check a log that the runbook doesn't mention, the ability to hear the difference between a healthy system and a quiet one—stays with you when you leave.

You shadow them for two weeks. You watch them work through their first incident and you do not intervene, even when they take the longer path, because the longer path is how you learn the short one. You answer their questions, and you notice that the questions they ask are not the questions that matter—the questions that matter are the ones they don't know to ask yet, and those will come at 3 a.m., alone, when the runbook ends and the instinct hasn't started and the only thing between them and the outage is the willingness to be uncertain and keep typing.

They will be fine. You know this because you were them, once, and you were fine. Not immediately. Not gracefully. But eventually, the way everyone in this profession eventually becomes competent: by accumulating enough 3 a.m. failures that the nervous system rewires itself around the work, and the work becomes reflex, and the reflex becomes the person.

You hand them the pager. They take it. It is lighter than they expected. It will get heavier.

The runbook covers the first 80%. The other 20% is you, at 3 a.m., making it up with good judgment. I can't give you the judgment. I can only give you the 3 a.m.s.

— said once, to a new hire, over coffee, never repeated because it sounded too much like a warning

Part V — And still

Go fuck yourself

Why can't I say that?

You can't, of course. You know you can't. You have known you can't since approximately the third month of your first job, when a senior engineer pushed a config change to production at 4:55 p.m. on a Friday without a change ticket, without a peer review, without so much as a message in the channel, and when the alerts started firing twenty minutes later and you were the one on call, and you traced it back to his commit and pinged him, he replied—from what was clearly a bar—"Yeah I figured it'd be fine."

You wanted to say it then. The words assembled themselves with the mechanical precision of a round chambering. Go fuck yourself. Three words. One syllable, one syllable, two syllables. Iambic, almost. It is the most efficient error message in the English language—a complete status report delivered in under a second. I have evaluated your behavior. I have found it unacceptable. I have no further feedback at this time.

You didn't say it. You said: "Let's hop on a call and take a look."

The professional register is a compression algorithm. It takes a feeling with high entropy—rage, contempt, disbelief—and compresses it into a lossy format that preserves the information but strips the signal that would make anyone actually hear it.

You learn the translations early. A parallel grammar that runs alongside your actual thoughts, like simultaneous interpretation at the United Nations, except both speakers are you and one of them is lying.

"Are you fucking kidding me?"

"Can you walk me through the decision to deploy without a CR?"

"This is the dumbest thing I've ever seen."

"I have some concerns about this approach."

"You are going to break production."

"Have we considered the failure modes here?"

"I told you this would happen."

"This aligns with the risk we flagged in the design review."

"I don't trust you to operate a light switch."

"Maybe we should add a runbook for this workflow."

"Go fuck yourself."

"Thanks for the context. Let me take a closer look."

Look at that last one. It took a three-word sentence containing a complete emotional truth and turned it into a sentence that communicates nothing. The professional version doesn't just remove the profanity. It removes the you. The person who caused the problem has been grammatically absolved.

Corporate communication is not a dialect. It is an amnesty program. Every sentence is structured to distribute responsibility so evenly that it becomes no one's. Mistakes were made. By whom? Lessons were learned. By whom? The language doesn't distinguish because the language was designed not to.

So you draft. And you redraft. Each revision removes something true and replaces it with something safe.

The deployment you pushed without approval took down monitoring for the entire eastern region. I've been cleaning this up for two hours. This is the third time this quarter. This needs to stop.

A deployment was pushed without a change record, which resulted in monitoring degradation in the eastern region. The issue has been remediated. Going forward, it would be helpful to follow the CR process.

Hi team, wanted to flag that a change went out this afternoon without a CR, which caused some monitoring issues in US-East. Everything's been resolved. As a reminder, all production changes should go through the standard change process so we can track impact and coordinate across teams. Happy to chat more if helpful. Thanks!

Draft one, which is the truth, would have been called aggressive. Draft two would have been called passive-aggressive. Draft three, which is a lie—a fluent, carefully constructed lie that removes your anger, your exhaustion, and your right to be frustrated from the historical record—draft three is called collaborative.

There is a word for the feeling of watching yourself translate your own competence into someone else's comfort. The word is professionalism. It is the most expensive thing you do. It costs you a little piece of accuracy every time you use it, and you can never bill for it.

"As a reminder, all production changes should go through the standard change process."

"Low-priority process reminder, safe to skim."

"If you do this again, the next outage is yours to fix at 3 a.m., and I will not answer the page."

The gap between the first and third is the tax you pay for professionalism. You cannot use a tool designed to remove sharpness and then be surprised when nothing cuts.

You think about this in the shower, which is where you think about everything that matters, because the shower is the only room in your life that doesn't have a Slack notification.

Go fuck yourself would communicate, in that moment: I am a person with fifteen years of experience, and your casualness about the consequences is an insult to every hour I have spent ensuring that those consequences don't reach you. All of that. Three words. Under a second.

Instead you'll say: "That's an interesting idea. Maybe we should run it through staging first?"

And they will hear: "She's being cautious again."

That's professionalism. It's a load-bearing wall. It holds the building up. Nobody thanks the wall.

You close the laptop. Tomorrow they will read your email and say "good reminder, thanks!" and they will mean it, and that will be the worst part—that the distance between what you wrote and what you meant is so total that your anger arrived as a pleasantry and was received as one.

The void is full of draft ones.

Go fuck yourself.

Per my last email.

— the most violent sentence in the English language, delivered daily, with a signature block and a legal disclaimer

Moments of clarity

Why do I do this? · rough draft — do not circulate

It comes at strange times. Not during the outage—during the outage you are machinery. Not during the meeting—during the meeting you are armor. It comes after. In the in-between spaces. In the car, in the shower, at 2 a.m. when you're technically asleep but not really because some part of your brain is always listening for the vibration pattern that means you are needed.

The question arrives whole: Why do I do this?

Not why does someone do this—that question has answers, institutional ones. Competitive salary. Meaningful impact. Those are answers for someone. They are not answers for you, specifically, at this specific hour. The question is: why do I—this particular person, with this particular brain, who could be doing a dozen other things that would never require me to understand what a route reflector does—why do I keep choosing this?

The emphasis matters. The "I" in quotes is not rhetorical decoration. It is a genuine interrogation of the self that has been built, layer by layer, around a set of skills that most people don't know exist. You are asking: is this thing I've become the thing I chose, or the thing that happened to me while I was choosing something else?

You could list the grievances. Four hundred and seventy-seven chapters of them. The 3 a.m. pages. The meetings. The emails you rewrite three times. The cycle. The repetition. The void.

And yet. Here you are. Chapter whatever. Still here.

The moments of clarity are not revelations. They are small. They are technical. They are the reason you stay.

You're tracing a packet path through a topology you built eighteen months ago. Six hops. Four policy evaluations. Two encapsulations. And it works. Not works like it doesn't crash—works like a sentence that parses, like a proof that resolves, like a piece of music where the voices converge on a chord you set up eight bars ago. You set it up. You knew it would land here. And it did.

That feeling. There is no word for it. It is closer to what a watchmaker feels when the movement runs true—a private verification that the thing you built is coherent, that the complexity you hold in your head corresponds to a reality that functions.

You wake up before the alarm. Because you were dreaming about the problem. The DNS resolution issue that's been intermittent for three weeks. In the dream, you saw it: the TTL on the cached response masking a retry storm that only manifests on Saturdays. You get up. You open the laptop. You're right. You are right. And the feeling is the feeling of a pattern completing.

The page comes in and you fix it in ninety seconds. Not because you're fast—because you're ready. Because you've been ready for this specific failure for two years. And afterward, in the silence, there is a feeling that is the opposite of loneliness—not because someone is with you, but because you are exactly where you are supposed to be.

The work is not drudgery interrupted by occasional crises. The work is a continuous act of comprehension. When the model predicts the failure, when the design absorbs the shock, when the packet takes the path you intended—that is not just competence. That is a form of knowing. And the knowing is the thing. The knowing is the whole thing.

You do it because the systems are beautiful. Beautiful the way a river delta is beautiful: complex, self-organizing, shaped by forces that are individually simple and collectively incomprehensible, and yet somehow, if you look at it long enough, coherent.

It is worth it in the way that any practice is worth it: because the practitioner and the practice have become the same thing, and to stop would not be to quit a job but to stop being the person you have become.

Don't revise this. Don't professionalize it. Don't compress it into something safe. This is the draft that's true.

You do this because the void isn't empty. The void is full of packets traversing paths you designed, and configurations you wrote, and failures you prevented, and failures you survived.

The 3 a.m. fix was true. The Saturday dream was true. The packet that took the right path was true. You were there. You saw it. That's a witness. That's enough.

The question is not why do I do this. The question, asked honestly, is could I stop? And the answer—felt in the body, in the quickening when the alert fires, in the calm when the system converges—the answer is no. Not because you are trapped. Because you are this. The practice and the practitioner. The system and the operator. The question and the answer in the same breath.

You open the terminal. The cursor blinks. The system is waiting.

You are here.

I do it because the knowing is the thing. The knowing was always the thing.

— rough draft, unsaved, written in a text editor with no filename, the morning after the page that didn't need a post-mortem

Afterword: Uptime

This book was written the way the operator works: in between. Between incidents. Between meetings. Between the alert and the resolution. It was not planned as a book—it was planned as nothing, because operators don't plan to write things down. They plan to fix things, and the writing is what happens in the space after the fix and before the next page.

The chapters are numbered non-sequentially because the events between them were not worth writing about. Or they were, and there wasn't time. Or there was time, and there weren't words. Most of what an operator knows never becomes text. It stays in the body, in the hands, in the half-second glance at a dashboard that tells you everything is fine or everything is about to not be.

If you are reading this and you are an operator, you already know everything in this book. You have lived it. These are your chapters too, numbered differently, but the same.

If you are reading this and you are not an operator, now you know what the green light costs.

The missing chapters

The other four hundred and sixty-seven chapters are still in the terminal, in the Slack threads, in the post-mortems, in the 3 a.m. silences. They were never written down.

They didn't need to be. The operator was there.